Daily’s developer platform powers audio and video experiences for millions of people all over the world. Our customers are developers who use our APIs and client SDKs to build audio and video features into applications and websites.

Today, as part of AI Week, we’re launching a Python SDK for integrating AI into WebRTC video and audio sessions.

Our kickoff post for AI Week goes into more detail about what topics we’re diving into and how we think about the potential of combining WebRTC, video and audio, and AI. Feel free to click over and read that intro before (or after) reading this post.

This year at Daily, we’ve been spending a lot of time with AI tools. We’ve helped customers build features using Large Language Models like GPT-4, image generation tools like DALL-E, and several kinds of smaller, specialized models.

Today we’re excited to unveil daily-python, a server-side toolkit which lets developers build just about any application that combines real-time video and audio with the functionality of AI models and services.

Building real-time experiences that leverage AI

The impressive capabilities of today’s AI tools open up many new possibilities for video calls and other real-time video and audio experiences.

For example, using LLMs, other generative AI tools, computer vision models, and audio processing libraries, it’s now possible to:

- Perform “speech-to-speech” translation between different languages

- Create virtual, synthetic characters that can interact conversationally

- Add an LLM assistant into any video call

- Automatically flag content — video, audio, and text — that violates moderation guidelines

- Configure an LLM to manage a multi-player, interactive game

- Identify items on-screen during a live stream (for example, for live shopping)

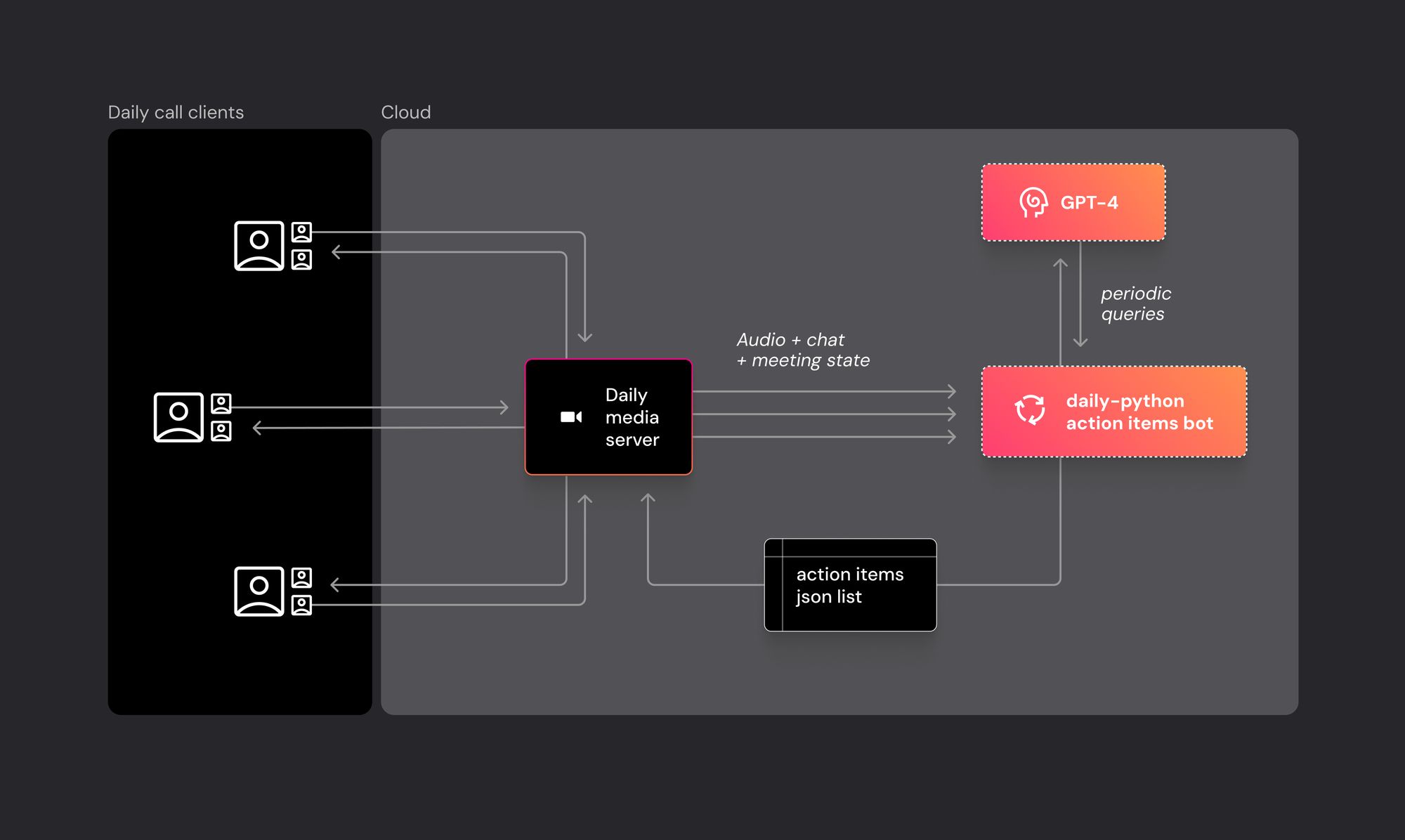

The Daily Python SDK is designed to support these use cases and others like them. You can write daily-python code that runs in the cloud, connects to a Daily session, and pulls real-time video, audio, and events data streams from the session. Those data streams can be fed into AI data-processing pipelines. You can also send video, audio, and events into the session from your daily-python code.

Python is a ubiquitous language and is a de facto standard for AI and machine learning development. daily-python code runs equally well on a Microsoft Azure VM, a Cerebrium application, an AWS Lambda function, your desktop machine, popular IoT platforms, and even in a Colab notebook.

When combined with our REST APIs, daily-python is a flexible toolkit for building just about any application that combines WebRTC video and audio with AI models and services.

Architecture and usage

daily-python is built on top of a core Rust and C++ codebase that is shared across our SDKs. This core handles media processing, networking, and state management, and gives us a high-performance foundation underneath the Python code. The shared core also means that all of our SDKs, including daily-python, work in a similar way. So if you’ve written code using, for example, daily-ios or daily-android, daily-python’s mechanics should feel familiar.

We designed daily-python to run in “headless” environments (for server-side use cases). But you can also run daily-python code interactively, for example in a notebook, a terminal app, a GTK app, or even with old-school tkinter. Our goal is for daily-python to run almost anywhere you can run Python code. Today we support Linux (x86_64 and aarch64) and macOS (Apple Silicon).

Bridging between a real-time WebRTC context and common AI tools and services is also a central design goal of daily-python. See the /demos directory in the repository for a growing collection of sample code.

Getting started with daily-python is usually as straightforward as running

pip install daily-python

and then writing code to create a local session client object and join a Daily meeting URL:

from daily import *

Daily.init()

client = CallClient()

client.join("https://my.daily.co/meeting", meeting_token = "MY_TOKEN")

In general, the mental model for using daily-python is that you’re writing a headless bot, which connects to a Daily room in much the same way as a human participant, and then does data-centric things with video, audio, and events streams.
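To make the bot mental model a little more concrete, here is a minimal sketch of a bot that joins a room and logs what happens there. It assumes daily-python's `EventHandler` base class, its `on_participant_joined` and `on_app_message` callbacks, and the `event_handler` argument to `CallClient`; check the docs for the current signatures. The names `describe_participant`, `run_bot`, `LoggingBot`, and `lifetime_s` are hypothetical, and the participant field names (`id`, `info`, `userName`) are assumptions about the shape of the participant data.

```python
import time


def describe_participant(participant: dict) -> str:
    """Build a log line from a participant dict (field names are assumed)."""
    info = participant.get("info", {})
    name = info.get("userName") or "unknown"
    return f"{name} ({participant.get('id', '?')})"


def run_bot(meeting_url: str, meeting_token: str, lifetime_s: float = 60.0) -> None:
    """Join a room, log events for lifetime_s seconds, then leave."""
    # Imported lazily so the helper above works without the SDK installed.
    from daily import CallClient, Daily, EventHandler

    class LoggingBot(EventHandler):
        # Callback names and arguments are assumptions based on the SDK docs.
        def on_participant_joined(self, participant):
            print("joined:", describe_participant(participant))

        def on_app_message(self, message, sender):
            print("app message from", sender, ":", message)

    Daily.init()
    client = CallClient(event_handler=LoggingBot())
    client.join(meeting_url, meeting_token=meeting_token)
    try:
        # Callbacks are delivered while the main thread simply waits.
        time.sleep(lifetime_s)
    finally:
        client.leave()
```

Calling `run_bot("https://my.daily.co/meeting", "MY_TOKEN")` would keep the bot in the room for a minute. A real bot would likely replace the `print` calls with hand-offs to whatever processing it needs to do.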

Here’s a conceptual diagram showing a daily-python application that keeps track of action items during a team meeting.
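As a rough illustration of the data-processing half of such an application, the sketch below collects utterances that look like action items. Everything in it is hypothetical: this post doesn't describe how the application extracts action items, and a real one would presumably hand transcript text to an LLM. The keyword pattern here is only a stand-in, and `ActionItemTracker` and `on_transcript` are made-up names. It also assumes that transcript text and speaker names are already being delivered from the session by the bot.

```python
import re
from dataclasses import dataclass, field

# Stand-in heuristic: phrases that often introduce a commitment.
_TRIGGER = re.compile(r"\b(i will|i'll|we need to|action item|todo)\b", re.IGNORECASE)


@dataclass
class ActionItemTracker:
    """Collects (speaker, text) pairs that look like action items."""

    items: list = field(default_factory=list)

    def on_transcript(self, speaker: str, text: str) -> bool:
        """Record the utterance if it matches the heuristic; return whether it did."""
        if _TRIGGER.search(text):
            self.items.append((speaker, text.strip()))
            return True
        return False

    def summary(self) -> str:
        """Format the collected items as a plain-text bullet list."""
        return "\n".join(f"- {speaker}: {text}" for speaker, text in self.items)


tracker = ActionItemTracker()
tracker.on_transcript("Ada", "I'll send the report by Friday.")
tracker.on_transcript("Bob", "Sounds good.")
print(tracker.summary())
```

Keeping the tracker free of any SDK calls means it can be tested on its own and wired up to the session's event callbacks afterwards.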

Our favorite thing about what we do at Daily is that we get to see all sorts of amazing things that developers create with the tools we’ve built. The AI world is evolving very quickly. New possibilities are emerging almost every week as new models are released and developers show off new applications. So we’re particularly looking forward to seeing how all of you use daily-python.

Check out the daily-python repository and docs. And, as always, join us on Discord, and find us online or IRL at one of the events we host.