Today we’re introducing APIs and cloud infrastructure for creating new kinds of experiences in live streaming and cloud video recording.

Daily’s Video Component System (VCS) is a developer toolkit that lets you build dynamic recordings and multi-participant live streams. Software developers can turn video calls into high-quality streaming experiences complete with dynamic graphics overlays, or embed production studios within their apps with all the complex processing handled in the cloud.

With VCS, developers can easily build:

- Dynamic text overlays

- Animated graphics

- Programmable layouts

- Rounded corners and outlines on video

- Event-driven layout changes

- Output to RTMP, HLS, or MP4

VCS encompasses both the baseline composition, Daily's default collection of compositions, and the VCS SDK, an open source version of the baseline composition that you can customize even further. We've built the VCS Simulator, where you can try out the baseline composition's options to see whether they fit your needs. Or you can dive into the VCS SDK docs for more customization.

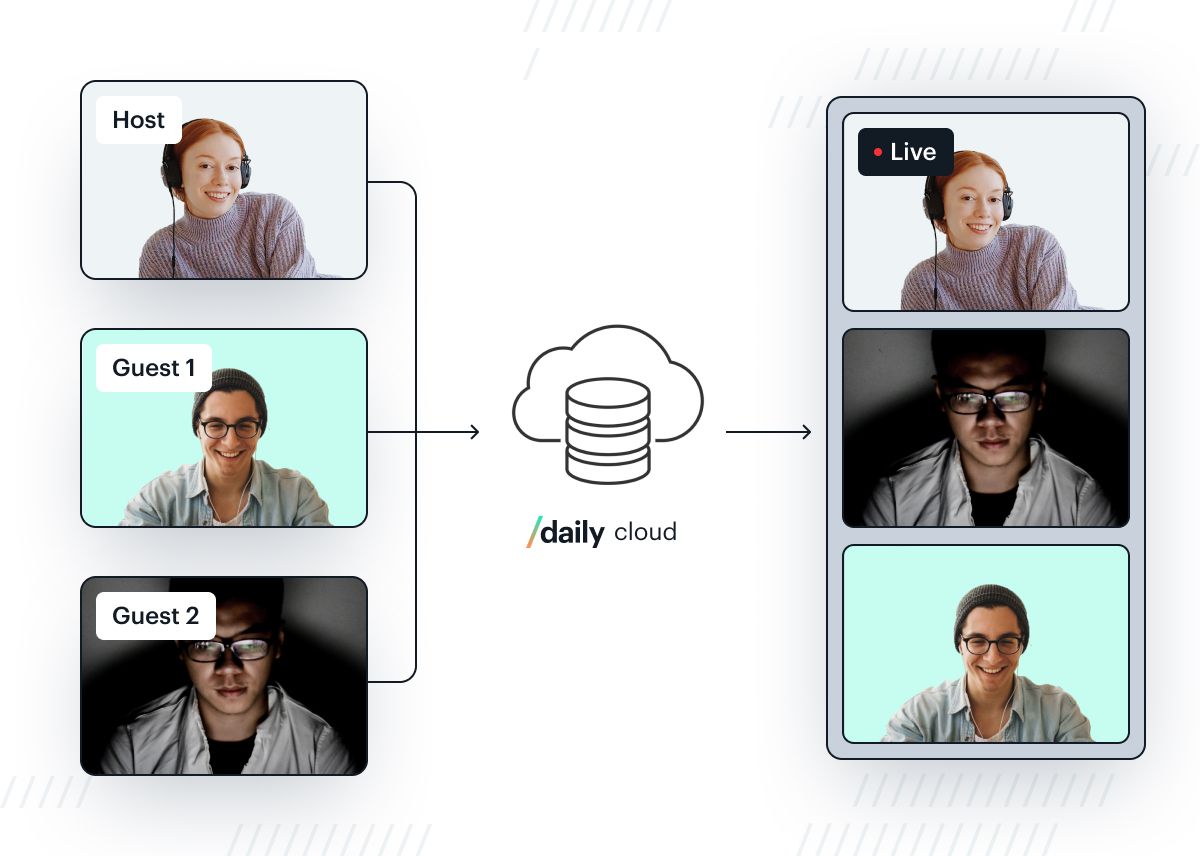

At Daily, we build video for a collaborative world. Everything starts with the assumption that, as in a video call, video can be rendered and produced on any number of devices. People may join or leave, and their production roles may change, even during a live stream. (This is very different from traditional live streaming products that assume one fixed stream origin. We have a post on live streaming architecture with lots of details on the state of the art.)

Daily’s VCS is a cloud-native architecture for video recording and streaming. You can use the VCS open source toolkit to design video compositions and dynamic motion graphics. (It uses a React-based rendering language, so many front-end developers will find it very familiar. Your client-side app itself does not need to use React to use VCS, though.)

Your compositions and graphics can then execute not only on Daily’s cloud servers but also directly within your client apps, so you can provide instant synchronized previews or per-user customizations of the same video output.

And thanks to Daily’s world-class video infrastructure, it scales up seamlessly whether your stream has one active participant or hundreds. Without changing your rendering code, you can simultaneously record video to cloud storage, stream over WebRTC with low latency to up to 15,000 participants, and/or stream to millions of people on popular live video platforms.

At this point, let me introduce myself as the author of this post. My name is Pauli Olavi Ojala. I’m a software engineer at Daily and the lead on the VCS project. I’m stoked about this opportunity to share with you how we think about VCS and what kind of products it can enable!

I’ll tell you about the “how” a bit later, but first let’s talk about the “why.”

What you can build — including use cases like edtech, events, and live commerce

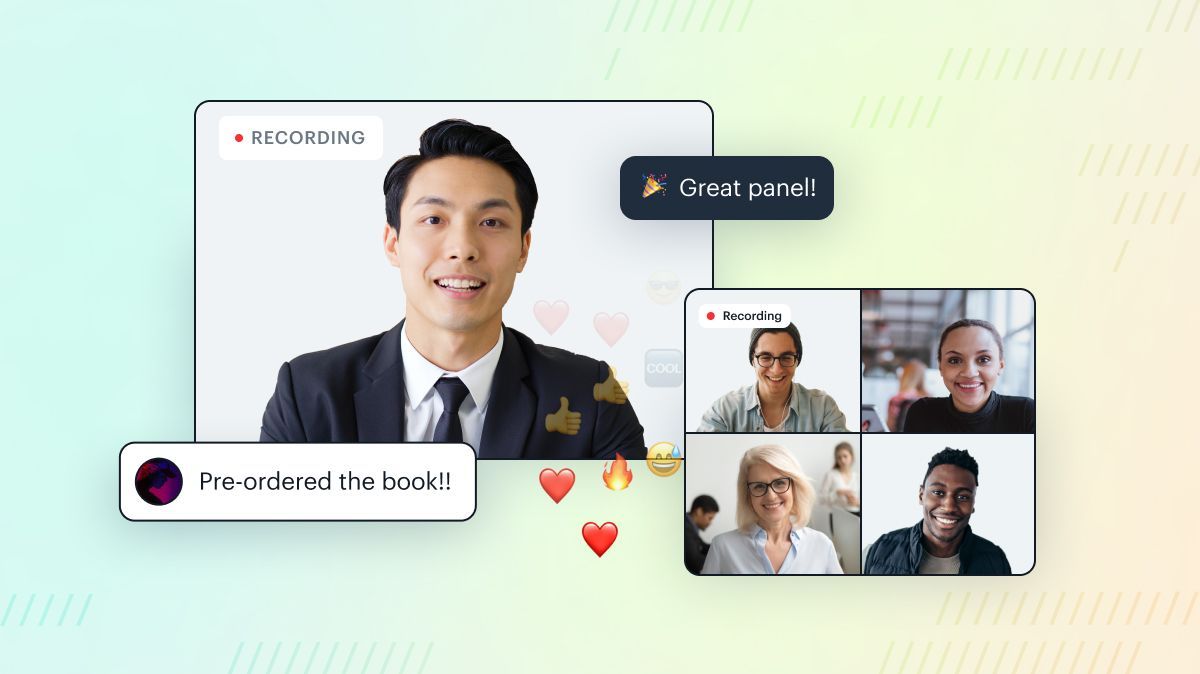

We consistently hear from customers that they want high production values in live streaming and cloud recording. Because they design their product user interfaces carefully, customers want an equal level of control over how their live streamed and recorded video is rendered, too.

Live streams and recordings should become more dynamic and engaging, with options to create custom branding, titles, animated reactions, novel visual layouts, and so on — all this without taxing end-user device performance.

I made this one-minute showreel to give you an idea of what’s possible with VCS:

With VCS, you can:

- Render WebRTC-based video calls to live streams (RTMP, HLS, etc.) and cloud-based recordings (MP4) using custom “compositions,” i.e., cloud-executable programs that define dynamic layouts and graphics, including:

  - Video modes like grids, multi-way nested splits, and picture-in-picture, with exact control over positioning, labels, and ordering

  - Overlays like captions, custom images, transitions, titles, and automatically animated notification pop-ups

  - Video layers styled with rounded corners and vector graphics borders

- Use our new open source React-based SDK to design custom components that encapsulate smart rendering behavior, then deploy them to Daily’s cloud-based media pipeline and/or embed them inside your apps. Examples include:

  - Active and stateful components like animated graphics, countdown timers, audience poll results, social media comment queues, etc.

  - Automatic live captioning with smart placement within a video layout

  - Complex dynamic titles with flowing text layouts

- Create a distributed live stream production studio:

  - Offload rendering work from end-user devices to Daily’s cloud

  - Design applications with multiple production roles that can all share a “universal rendering” view of the output stream

  - Create custom player front-ends with a separate local rendering of the stream using the same video call data
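To make layout modes like grid and picture-in-picture more concrete, here is a minimal, framework-free TypeScript sketch of the geometry a composition has to compute. It is not VCS SDK code: the `Frame` type, the `gridLayout` and `pipLayout` functions, and the default gap, scale, and margin values are all hypothetical, chosen only for illustration.

```typescript
// Hypothetical sketch, not the VCS SDK API: computes where each participant's
// video is drawn inside an output frame of the given size (in pixels).
interface Frame {
  x: number;
  y: number;
  w: number;
  h: number;
}

// Grid mode: use the smallest near-square grid that fits `count` videos,
// then fill it row by row with a uniform gap around every cell.
function gridLayout(count: number, width: number, height: number, gap = 10): Frame[] {
  if (count <= 0) return [];
  const cols = Math.ceil(Math.sqrt(count));
  const rows = Math.ceil(count / cols);
  const cellW = (width - gap * (cols + 1)) / cols;
  const cellH = (height - gap * (rows + 1)) / rows;
  const frames: Frame[] = [];
  for (let i = 0; i < count; i++) {
    const col = i % cols;
    const row = Math.floor(i / cols);
    frames.push({ x: gap + col * (cellW + gap), y: gap + row * (cellH + gap), w: cellW, h: cellH });
  }
  return frames;
}

// Picture-in-picture: the first video fills the output, and the second is an
// inset in the bottom-right corner, scaled to a fraction of the output size.
function pipLayout(width: number, height: number, scale = 0.25, margin = 20): Frame[] {
  const w = width * scale;
  const h = height * scale;
  return [
    { x: 0, y: 0, w: width, h: height },
    { x: width - w - margin, y: height - h - margin, w, h },
  ];
}
```

For example, `gridLayout(4, 1280, 720)` produces a 2×2 grid of 625×345 cells. Computing frames from the participant count, rather than hard-coding positions, is what lets a layout adapt as people join or leave.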

Let’s talk about potential products:

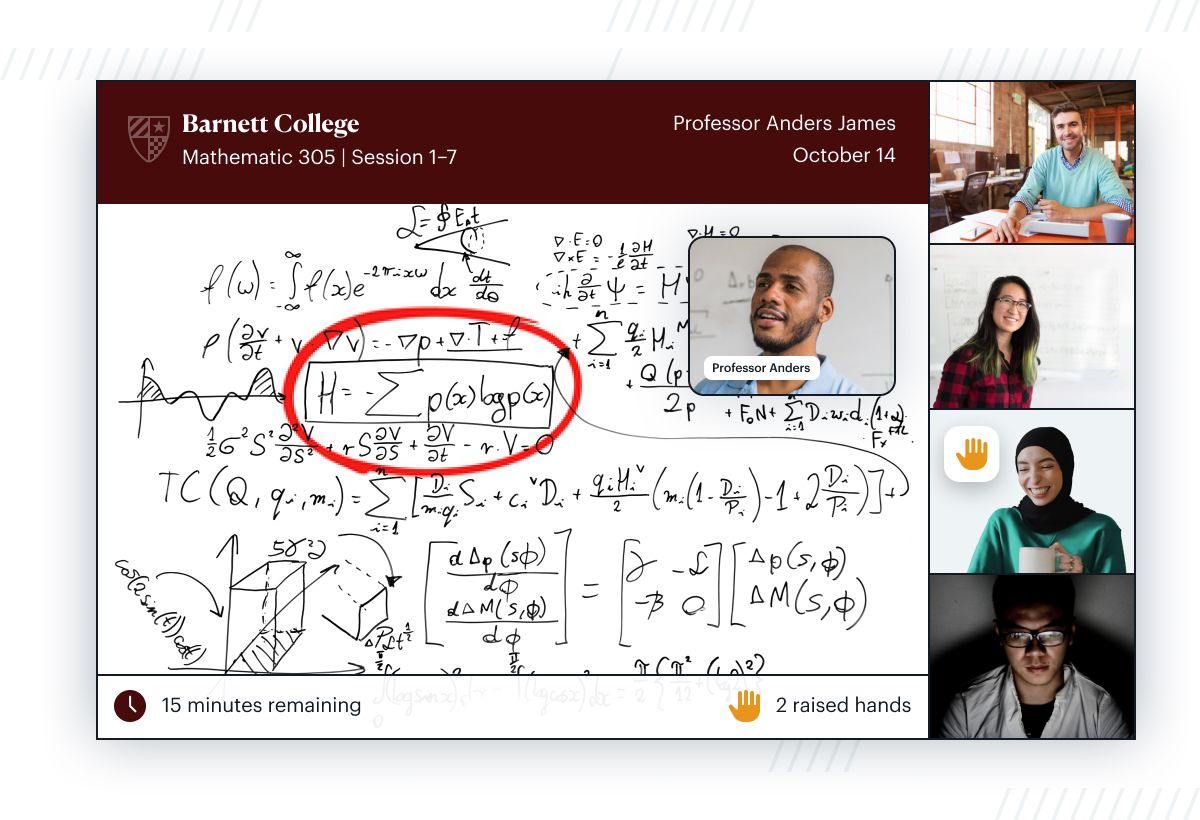

Edtech developers can integrate whiteboards and other features essential to online classes. Interactive elements like “raise your hand” or “Q&A” help students and teachers feel more connected: participant activity becomes visible in the stream or recording, so it’s not just one person talking.

Event developers can bring audience presence to a new level by tapping into the “buzz” of online participants, for example by integrating social media comments into the stream. It’s also easy to style video layouts to apply your branding and polish the event’s look and feel. In this screenshot, the live presenter videos are rendered into circular masks with high-quality vector graphics outlines.

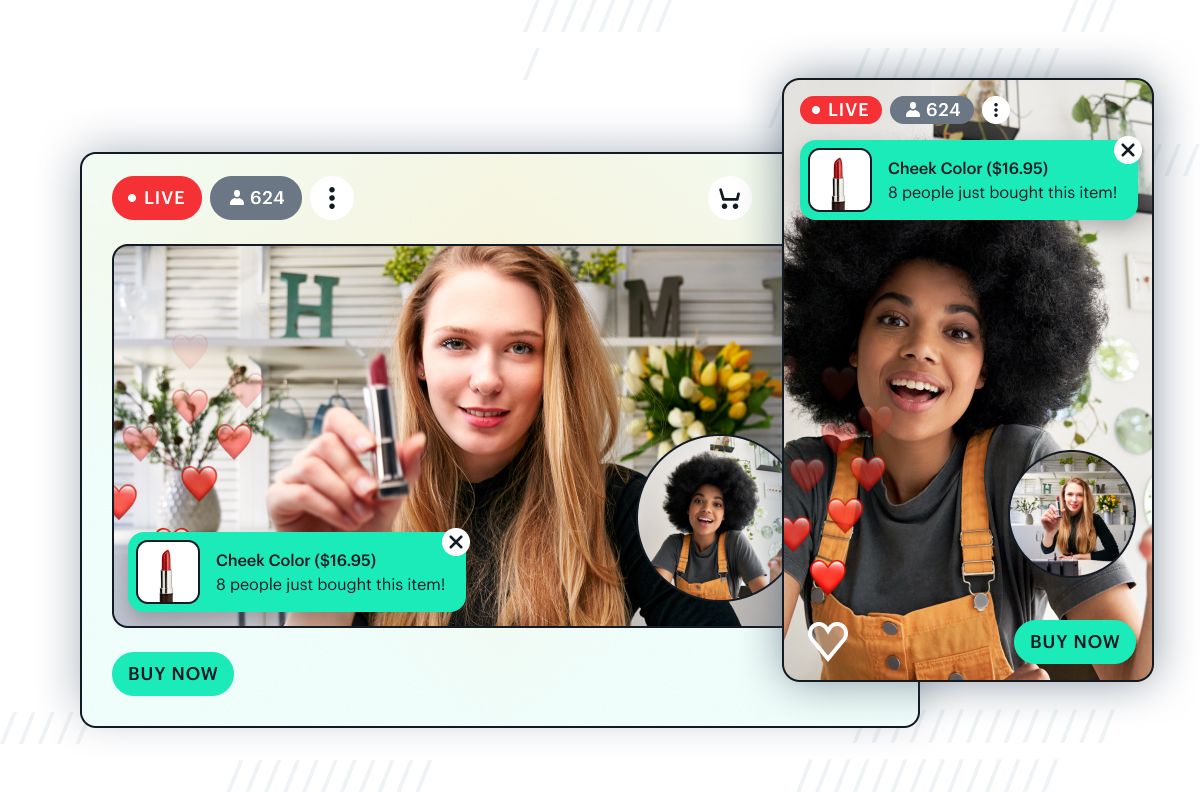

Build live commerce at scale, without lag or chat delays. Daily supports up to 15,000 participants in a real-time call, so you can create large-scale experiences for fans and community. The same call can seamlessly render into a conventional live stream (Facebook, YouTube, etc.) for millions of passive viewers. Thanks to VCS, all these participants and viewers can get the same rich social experience, with dynamic elements like “toasts” that keep the stream’s visuals hopping with buyer activity.

Pricing

There is no additional charge for using VCS when building live streams and cloud recordings with Daily. Live streaming and cloud recording are priced by the minute. Learn more at our pricing page.

The VCS vision and opportunity

Now that we’ve seen some of the possibilities, I’d like to tell you more about what goes on under the surface, and where we at Daily see it leading.

When designing VCS capabilities, I’ve been using a mental model of a three-rung “ladder of complexity” for developing cloud-based streaming applications. In order of increasing ambition level, it goes like this:

- Make cool stuff happen in your stream.

- Make cool stuff happen automatically.

- Make cool stuff happen everywhere.

(“Cool stuff” here is a highly scientific shorthand that encompasses the previously listed bullet-point features like custom video call layouts, animated graphics overlays, automatic captions, dynamic visual assets, social content inserted into streams, etc.)

VCS enables all of these. You can start at the first rung and climb up as your product needs grow.

Make cool stuff happen in your stream

On rung one, we’re doing all this rendering in the cloud, and you’re driving it over Daily’s API. Your application sends messages that directly control what gets displayed. If you need them, there are highly granular controls over specific aspects of rendering (the ordering of participants, visual parameters like margins and positions, etc.), but we provide sensible default behavior to get your application up and running quickly. We also offer a visual development tool called the VCS Simulator that lets you build these API messages rapidly.
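As a hedged illustration of “sensible defaults plus granular overrides,” the sketch below merges a partial set of parameters over a default set before it is sent. The parameter names (`mode`, `showLabels`, `margin`, `participantOrder`) and the `buildLayoutMessage` helper are hypothetical and are not the actual Daily API or baseline composition parameters; the VCS reference documentation lists the real ones.

```typescript
// Hypothetical parameter set for a cloud composition; the real VCS
// baseline composition uses different names and many more options.
type Mode = "single" | "grid" | "pip" | "split";

interface CompositionParams {
  mode: Mode;
  showLabels: boolean;
  margin: number; // pixels between videos
  participantOrder: string[]; // participant IDs, most prominent first
}

// Defaults mean an app can send an empty message and still get a usable layout.
const DEFAULT_PARAMS: CompositionParams = {
  mode: "grid",
  showLabels: true,
  margin: 10,
  participantOrder: [],
};

// Only the fields the app cares about are overridden; the rest keep their defaults.
function buildLayoutMessage(overrides: Partial<CompositionParams>): CompositionParams {
  return { ...DEFAULT_PARAMS, ...overrides };
}

// Switch to picture-in-picture with the host first; labels and margin stay default.
const msg = buildLayoutMessage({ mode: "pip", participantOrder: ["host", "guest-1"] });
console.log(JSON.stringify(msg));
// {"mode":"pip","showLabels":true,"margin":10,"participantOrder":["host","guest-1"]}
```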

Make cool stuff happen automatically

On rung two, it’s still happening in the cloud, but you can plug in your own rendering logic. Your code can now run directly in the cloud and make active, stateful decisions about rendering. (The advantage of this model becomes clearer as your API calls get more complex. Instead of having to specify every little rendering change from the outside, your application can now send only the minimum amount of data and let your cloud-embedded rendering logic figure out how to respond, e.g. what should be displayed and animated over time.)
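Here is a hypothetical sketch of that division of labor. The application pushes only small events (such as “Ana raised her hand”), and the stateful logic decides which notification is visible at any moment and when it expires. The `ToastQueue` class, the event shape, and the four-second default duration are illustrative assumptions, not part of the VCS SDK.

```typescript
// Hypothetical sketch of stateful, event-driven rendering logic (not VCS SDK code).
interface AppEvent {
  text: string; // e.g. "Ana raised her hand"
}

class ToastQueue {
  private readonly durationSec: number;
  private queue: string[] = [];
  private current: string | null = null;
  private shownAt = 0;

  constructor(durationSec = 4) {
    this.durationSec = durationSec;
  }

  // The app only pushes small events; it never manages timing or animation.
  push(event: AppEvent): void {
    this.queue.push(event.text);
  }

  // Called once per rendered frame with the current time in seconds (non-decreasing).
  // Returns the toast text to draw, or null when nothing should be shown.
  visibleAt(t: number): string | null {
    if (this.current !== null && t - this.shownAt >= this.durationSec) {
      this.current = null; // the visible toast has expired
    }
    if (this.current === null && this.queue.length > 0) {
      this.current = this.queue.shift()!; // promote the next queued toast
      this.shownAt = t;
    }
    return this.current;
  }
}

const toasts = new ToastQueue(4);
toasts.push({ text: "Ana raised her hand" });
toasts.push({ text: "Ben joined" });
console.log(toasts.visibleAt(0)); // Ana raised her hand
console.log(toasts.visibleAt(3)); // Ana raised her hand
console.log(toasts.visibleAt(4)); // Ben joined
console.log(toasts.visibleAt(8)); // null
```

The application never sends “hide this toast” or “show the next one”; those decisions live entirely in the rendering logic.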

This works using custom VCS components, which are React components that can directly control aspects of Daily’s cloud media pipeline. We offer an open source SDK so you can build VCS components on your desktop, test them using the VCS Simulator workflow already familiar from rung one, and then upload them as part of your stream setup over the Daily API.

See our moving watermark tutorial for an example of how to do this.
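This is not the tutorial’s code, but the core idea behind a moving watermark can be sketched as a pure function of time: given the current time and the frame and watermark sizes, return a position. The `watermarkPosition` function and its 20-second default period are hypothetical.

```typescript
// Hypothetical sketch (not the tutorial's code): a watermark position computed
// purely from time, so no per-frame state has to be stored.
interface Point {
  x: number;
  y: number;
}

// Moves a w×h watermark back and forth along the diagonal of a frameW×frameH
// output, completing one round trip every `periodSec` seconds. Assumes t >= 0.
function watermarkPosition(
  t: number,
  frameW: number,
  frameH: number,
  w: number,
  h: number,
  periodSec = 20,
): Point {
  const phase = (t % periodSec) / periodSec; // 0..1 within the current round trip
  // Triangle wave: 0 -> 1 over the first half of the period, 1 -> 0 over the second.
  const s = phase < 0.5 ? phase * 2 : 2 - phase * 2;
  return { x: s * (frameW - w), y: s * (frameH - h) };
}

console.log(watermarkPosition(5, 1280, 720, 200, 100)); // { x: 540, y: 310 }
```

Because the position depends only on its inputs, the animation is reproducible: rendering the same moment twice places the watermark in the same spot.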

Make cool stuff happen everywhere

On rung three, the cloud rendering becomes a distributed experience. What if your product needs to render video compositions and graphics not just into a cloud recording or encoded live stream, but also live within different participant/viewer UIs where latency is crucial? Today, the common solution is to build these features in custom front-end code, but this doesn’t scale very well when your video streaming needs get more complex and client platforms multiply. VCS lets you take the code from rung two and run it directly inside client applications. We call this universal rendering. It’s a form of “WYSIWYG” where each participant gets a locally rendered preview that’s an exact match for what happens in the cloud pipeline, but without any of the latency.
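Universal rendering only works if the cloud pipeline and every client produce the same result from the same inputs. One way to model that requirement, sketched here under our own assumptions, is to keep composition logic as a pure function from shared state to a list of draw commands. `SharedState`, `DrawCommand`, and `renderComposition` are hypothetical names, not the API of the VCS client rendering library.

```typescript
// Hypothetical model of universal rendering (not the VCS client library API).
interface SharedState {
  participants: string[]; // ordered participant IDs, synced to every renderer
  title: string | null;
}

type DrawCommand =
  | { kind: "video"; id: string; slot: number }
  | { kind: "text"; content: string };

// Pure function: no network calls, no local clock, no randomness. Everything it
// depends on comes from SharedState, so any renderer given the same state
// produces the same commands.
function renderComposition(state: SharedState): DrawCommand[] {
  const cmds: DrawCommand[] = state.participants.map(
    (id, slot): DrawCommand => ({ kind: "video", id, slot }),
  );
  if (state.title !== null) {
    cmds.push({ kind: "text", content: state.title });
  }
  return cmds;
}

// The cloud pipeline and a participant's local preview each run the same code.
const state: SharedState = { participants: ["host", "guest"], title: "Q&A" };
const cloudOutput = renderComposition(state);
const localPreview = renderComposition(state);
console.log(JSON.stringify(cloudOutput) === JSON.stringify(localPreview)); // true
```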

This is how VCS becomes more than just a production studio API ported to the cloud. It also lets you build true distributed production tools. As live streaming products grow more engaging and complex, their production techniques must also grow. The single creator sitting at their computer can’t handle everything themselves anymore. New experiences may require multiple roles coming together to produce a stream: hosts, guests, and producers who control specific aspects of the output. All of these people will need their own custom UI with an accurate view of the same output.

On the other hand, your customization needs might instead be at the opposite end of the producer-viewer pipeline: not multiple producers but multiple types of viewers, each requiring their own variant of the same rendering. VCS used with Daily’s front-end libraries can handle this too. The combinations ultimately are unique to your product, but with VCS, Daily is now offering the toolkit you need to explore this exciting feature space with a guarantee that whatever you build will also scale up to production.
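A hypothetical sketch of the multiple-viewers case: one shared list of layers describes the composition, and each role renders its own variant by filtering that list. The `Role` values, layer IDs, and `layersFor` function are illustrative assumptions, not Daily APIs.

```typescript
// Hypothetical sketch: per-role variants of one shared composition.
type Role = "host" | "producer" | "viewer";

interface Layer {
  id: string;
  audience?: Role[]; // roles that see this layer; omitted means everyone
}

// One shared layer list describes the whole composition...
const layers: Layer[] = [
  { id: "video-grid" },
  { id: "title" },
  { id: "speaker-notes", audience: ["host", "producer"] },
  { id: "safe-area-guides", audience: ["producer"] },
];

// ...and each role's UI renders its own variant by filtering that list.
function layersFor(role: Role, all: Layer[]): string[] {
  return all
    .filter((layer) => layer.audience === undefined || layer.audience.includes(role))
    .map((layer) => layer.id);
}

console.log(layersFor("viewer", layers)); // [ 'video-grid', 'title' ]
console.log(layersFor("host", layers)); // [ 'video-grid', 'title', 'speaker-notes' ]
```

Keeping a single source list means every variant stays in sync when the shared layers change; only the per-role filter differs.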

I don’t want to overpromise. As with every new technology, aspects of this vision remain a work in progress. Today we’re already confident that rungs one and two of the “ladder” described above are ready for use (even as we’re keeping the “beta” label for now so we can more easily adapt based on feedback). You can start using the VCS baseline composition, easily experiment with its ready-built layout modes and graphics features in the VCS Simulator, and access them over the Daily API in your streams and recordings without having to change anything about how your client app works. You can also build custom VCS components and deploy them into Daily’s cloud-based media pipeline.

The universal rendering of rung three has great promise, but it also means you’d need to change some things about how you build front-ends for your video apps. We’re cognizant that this is a big ask. The open source VCS SDK we’re releasing today includes a “sneak peek” of the VCS client rendering library for web apps, but it’s not production-ready. We’re sharing this code in the open to give you a glimpse of what universal rendering could be, and we invite your feedback and collaboration as we start building out these capabilities across the many client platforms Daily already supports for video calling.

Learn more

Interested in learning more? A great place to start is the VCS reference documentation. I’ve also written a tutorial that shows how to implement an animated video overlay using the VCS SDK.

There are also two tutorials for working with VCS’s baseline composition, the default collection of VCS compositions. Together they cover building custom layouts, adding image overlays, and triggering the toast component.

We welcome feedback and questions! You can talk with our developer support via chat or email. Fill out our sales form if your team would like to discuss how we work with larger companies (enterprise pricing, SLAs, etc.). Thank you!