This post comes in two parts. The first half will explain why I think this topic is both generally interesting and relevant to developers building with WebRTC. There’s a splash of movie history, an analysis of a popular TV format to give you some insight into how these concepts apply in all sorts of productions, and discussion of when your app can benefit the most.

But if you’re in a hurry to get to the technical stuff, that’s perfectly ok! You can go ahead and jump to the section “Selecting and switching in VCS” and dive directly into code examples.

Still with me? Brilliant. Let’s take a very brief detour to the year 1950 and a black comedy classic which is well worth your time even today. I’m talking about “Sunset Boulevard”. The movie ends on this famous line by Norma Desmond, an aging former Hollywood starlet who never quite realized her fame is gone:

“You see, this is my life! It always will be! Nothing else! Just us, and the cameras, and those wonderful people out there in the dark!... All right, Mr. DeMille, I'm ready for my close-up.”

Without giving away the plot, we can analyze what’s funny about her request. Norma is flipping the customary roles of director and actress. Usually during a movie shoot, actors wait patiently in the wings to be called to the set. When they get called, the director chooses the type of shot—close-up or otherwise. Not so with Norma. She’s making herself available on her own time and she’s calling the shots too.

At this point you may be asking: “What does any of this have to do with WebRTC? I don’t recall Cecil B. DeMille directing too many video calls.”

Sure, a WebRTC session doesn’t need DeMillean grand feats of mise en scène, but it still has an aspect of direction:

- Who is available?

- Whose stream is connected where?

- Who is on screen right now?

These choices traditionally have been implicit and managed for end users by a default software implementation. But developers now are conceiving of video experiences in more innovative and flexible ways. If you're building a more advanced video communications app, you may need to think more deeply about these questions. Thinking about participants like actors in various states of readiness can help to clarify the design of your real-time video product or feature.

In this post I’d like to explain the problem space, lay down some necessary terminology, and show how you can make these choices using Daily’s API for both interactive video calls and live streams. (And if eventually you do want to go full DeMille, don’t forget that Daily’s video background replacement API can be used to place your participants in ancient Egypt or biblical Israel. Chewing the scenery with overacting still needs to be supplied at the source; we don’t yet have an AI for that.)

Exploring a familiar TV format

Most people probably know Who Wants to Be a Millionaire, the TV game show format licensed all around the world. (You may also have seen it in the Oscar-winning movie Slumdog Millionaire.)

This kind of game show is interesting to us because it’s nearly real-time in execution and follows a strict template, almost like a computer program. There are multiple participant roles which determine when someone gets on screen. You could think of the show format as a state machine: “If person in role X chooses option C, then we enter state N where we’ll need to bring in a person in role Y to do their thing”… These are the kind of rules we’d be encoding in program code if this game were a WebRTC application.

There are four roles in the show:

- host

- contestant

- “Phone a friend” remote helper

- “Ask the audience” live helper

The last role varies between countries. Sometimes the “Ask the audience” lifeline is implemented as an electronic poll. For this discussion, let’s assume it’s live: an audience member gets to stand up and tell the contestant what they believe is the right answer.

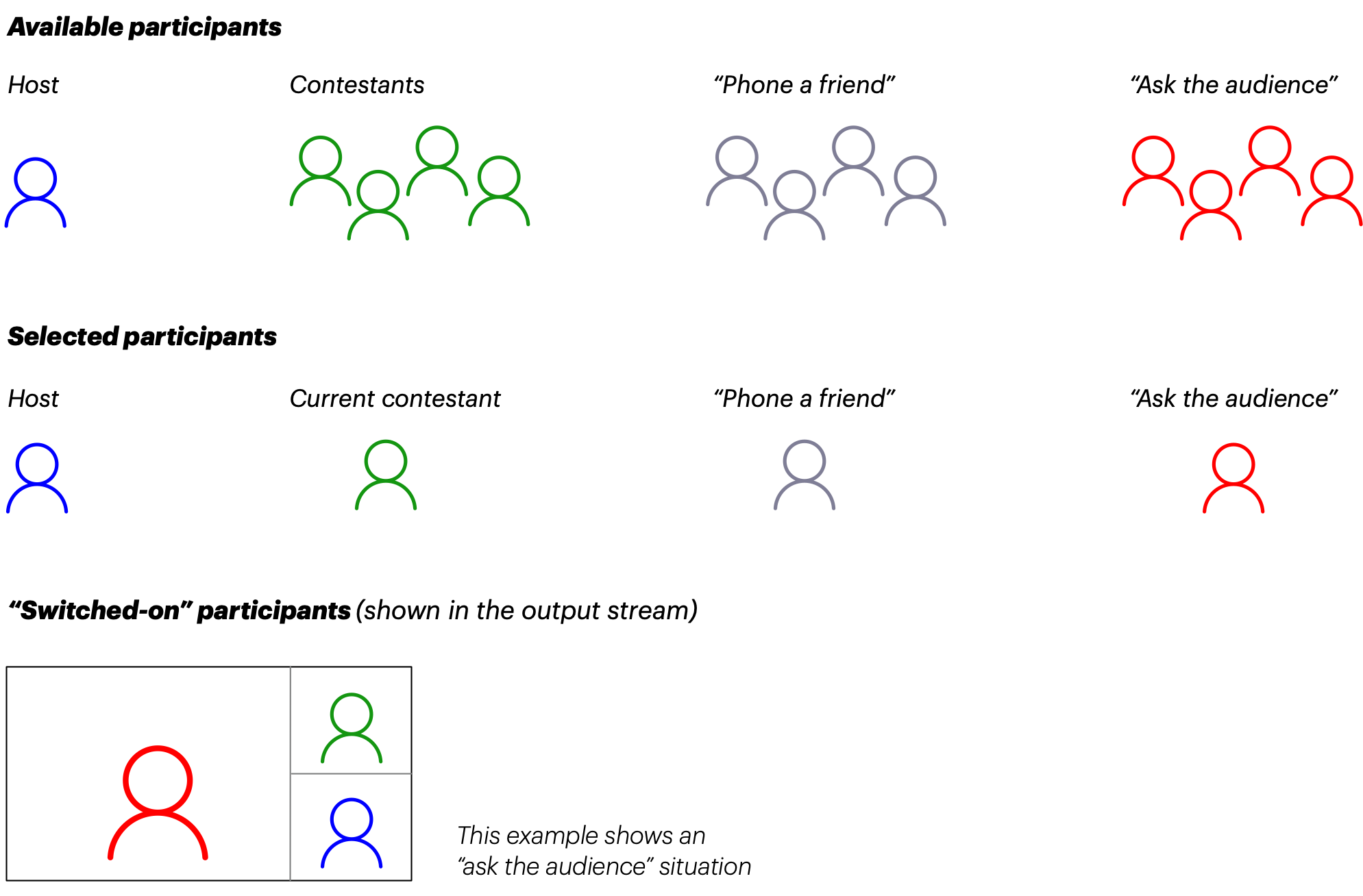

Of the four roles, the host is the only permanent one. There is always a camera and a spotlight pointed at them. The other roles are initially waiting to be called in. They are available but not yet selected. (This also applies to contestants because usually the game starts with six or ten contestants and an initial round of “Fastest Finger First” is used to select who gets to play for money.)

Once the main game starts, the host and one contestant are in their places. They’re now permanently selected. The director can mix their camera streams into the final output stream at any time.

In a TV studio, a hardware device called a switcher is used for this purpose. When a person is on-screen, we can say they’re switched on.

We now have three states a participant can be in:

- available

- selected

- switched-on

This diagram shows the progression of states:

The red audience members are present in the studio, so they’re always available. But they only get selected in the relatively rare case when the contestant uses the “Ask the audience” lifeline. And the selected audience member doesn’t actually appear on-screen until the director is confident that they’re ready to perform and switches them into the mix.

The same kind of production logic exists in most live events and multi-camera TV productions. Consider the Jumbotron display at an American sports event. The audience members are available. When the main event is on hold, the director may ask: “Find someone good-looking wearing a home team shirt.” The camera crew selects that person, then the director switches them on. (A few seconds later the person notices themselves on the big screen and goes crazy.) It’s fundamentally the same flow for everyone that appears on-screen.

Applying the states to WebRTC

The terminology here is a bit challenging because we lack established vocabulary that would apply to both traditional video productions and interactive applications. I’ve chosen to use the words “selected” and “switched-on”, but you might prefer “ready” and “in the mix”, or “connected” and “visible”, or something else. The reason for my choice is that I want to emphasize the timing difference between the activities of selecting and switching:

- Selecting is a preparatory activity. In traditional media productions, it may take anywhere from a few seconds to several minutes to get a participant ready.

- Switching is a real-time activity. When you’ve got the streams, you can switch between them in a fraction of a second.

It is the same in a WebRTC session. Selecting means connecting the participant’s audio and video streams to where you are rendering. When you have the streams available, you can switch between them rapidly.

In a basic video call with a handful of participants, we usually don’t need to think about these state transitions. Every participant that becomes available in the room becomes automatically visible to everyone else. Behind the scenes, the client application is selecting new participants as they join and switching them on so their video gets rendered in a box on screen.
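To make the three states concrete, here is a minimal sketch of them as a tiny state machine. The state names come from this post; the transition table and the `transition` function are purely illustrative and not part of any Daily API.

```javascript
// Allowed moves between the three participant states from this post.
// This table is an illustration, not a Daily API.
const TRANSITIONS = {
  available: ['selected'],
  selected: ['switched-on', 'available'],
  'switched-on': ['selected'],
};

// Returns the new state, or throws if the move skips a step
// (for example going straight from "available" to "switched-on").
function transition(current, next) {
  const allowed = TRANSITIONS[current];
  if (!allowed) throw new Error(`Unknown state: ${current}`);
  if (!allowed.includes(next)) {
    throw new Error(`Cannot go from ${current} to ${next}`);
  }
  return next;
}

// An audience member is called in, goes on screen, then steps back.
let state = 'available';
state = transition(state, 'selected');    // streams get connected (slow)
state = transition(state, 'switched-on'); // director cuts to them (fast)
state = transition(state, 'selected');    // off screen, still connected
```

The point of the table is that a participant can't be switched on without being selected first, which is the same constraint a TV director works under.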

As the number of participants on a call increases, it becomes a practical necessity to have some kind of switching logic because not everyone can fit on the screen at once. A typical default implementation is to do automatic switching based on active speaker status. The application detects the last person to start speaking and broadcasts this metadata. Each client application then switches its rendering so that the active speaker appears first. (This is also what Daily Prebuilt, our hosted video call component, does.)
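As a rough sketch of that default behavior, the client-side switching could be as simple as reordering a list. The function names and participant IDs below are made up for illustration; in a real client the active speaker ID would come from whatever active speaker notification your call library provides.

```javascript
// Moves the active speaker to the front and keeps everyone else in
// their existing order. Returns a new array; the input is not modified.
function orderByActiveSpeaker(participantIds, activeSpeakerId) {
  if (!participantIds.includes(activeSpeakerId)) return [...participantIds];
  return [
    activeSpeakerId,
    ...participantIds.filter((id) => id !== activeSpeakerId),
  ];
}

// Only the first `maxTiles` participants fit on screen.
function visibleParticipants(participantIds, activeSpeakerId, maxTiles) {
  return orderByActiveSpeaker(participantIds, activeSpeakerId).slice(0, maxTiles);
}

const ids = ['ana', 'ben', 'chloe', 'dev'];
console.log(visibleParticipants(ids, 'chloe', 2)); // [ 'chloe', 'ana' ]
```

Everyone in `ids` is already selected (their streams are connected); the function only decides who is switched on.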

This approach generally works fine for a collaborative call where everyone’s role is equal. But there are several ways in which your application’s needs may outgrow these automatic defaults:

- The call needs more structure, like an event.

  - Consider a corporate virtual offsite. It might include an intro, a few executive presentations, and a Q&A session. This kind of multi-hour event goes more smoothly if it has pre-programmed structure to bring the right speakers in at the right time, to queue up questions, etc.

- A “final truth” rendering of your video call needs to be produced. This would be a recording, a traditional live stream of your video call, or both.

  - Such a rendering is independent of any individual participant, so you have to explicitly consider its layout and participant selection. I’ve written before on this blog about why recording WebRTC is so hard; check it out for a deep dive into the challenges at hand!

- Your application is what’s called an Interactive Live Stream (ILS).

  - Interactive Live Streaming is the video industry's general term for very low-latency streaming to large audiences. To make things a little complicated, what "very low-latency" exactly means depends on the platform. ILS at Daily is built on WebRTC. All participants in the session send and receive the video with real-time latency, under about 200 ms. (We dig into latency, and why we build ILS on WebRTC, in our blog post on Interactive Live Streaming.)

  - I'm using Daily's real-time definition of ILS. For the topics in this post, ILS is furthermore a novel type of (WebRTC-based) application with a highly asymmetric balance of available vs. selected participants. You might have a few people (the “talent” or “creators” or whatever your application domain chooses to call them) whose AV streams are distributed to thousands of people viewing at ultra-low latency. While everyone in the call can send and receive video at real-time latency, for the use cases I'm discussing here, it’s absolutely crucial to understand which participants are view-only and which ones can become selected and switched into the stream.

  - One more note about industry terms: how does ILS differ from “traditional” live streaming, the kind that millions of creators already do on platforms like Twitch and YouTube? The fundamental difference is, again, latency. On traditional streaming platforms it takes anywhere from 5 to 30 seconds for viewers to receive the video stream. That's because the video is distributed via RTMP/HLS (not WebRTC). With Daily ILS, the latency is similar to a video call, so participants can interact in real time. (Read our post Video Live Streaming: Notes on RTMP, HLS, and WebRTC for more on the evolution of live streaming.)

ILS and event-specific applications are fascinating, but too much to cover in one blog post because they’re mostly about designing your client application. (I hope to return to this topic eventually though! At Daily we’ve been working on a unified rendering solution to make it easier to design this kind of app to span both web and mobile platforms.)

However, the second case — i.e. a recording or traditional live stream — is amenable to a deeper look right now. A recording or live stream on Daily’s platform is a unique case because it uses server-side rendering. There is a very specific API we can use to easily select and switch participants.

Selecting and switching in VCS

VCS is Daily’s Video Component System, currently in beta. You can read more about it in the announcement blog post. In short, VCS is a powerful toolkit for controlling your live stream or recording. It gives you a high degree of control over how the stream or video file gets rendered on Daily’s cloud. This control also includes selecting and switching participants.

So let’s assume you’ve got a live stream or recording running, using Daily’s startRecording()/startLiveStreaming() methods. How do you control which participants are shown in the server-rendered stream?

There are two possibilities (not mutually exclusive). You can:

- Select participants on the room level using the `participants` property on `updateRecording()`/`updateLiveStreaming()` methods.

- Switch videos on the composition level using params available on the VCS baseline composition.

  - These params are all under the `videoSettings` group and have either a `prefer` or `omit` prefix:

    - `videoSettings.preferredParticipantIds`

    - `videoSettings.preferScreenshare`

    - `videoSettings.omitPaused`

    - `videoSettings.omitAudioOnly`

    - `videoSettings.omitExtraScreenshares`

  - You could think of the `prefer*` ones as sorting the selected set into display order, and the `omit*` ones as filtering out specific types.

  - You’d send these values inside a `composition_params` property on the `updateRecording()`/`updateLiveStreaming()` methods.

The difference between these two options is exactly what we’ve been discussing throughout this post (but if you jumped right here from the beginning, don’t worry, this paragraph will explain the essential terminology). The first option lets you specify a list of available participants that you want to select on the server, i.e. make available and ensure the audio and video streams are connected. The second one then lets you switch between the participant streams that you’ve selected. With these tools, you can direct the actions taken by the rendering server quite precisely. We believe this set already covers most applications’ requirements out of the box.
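Here is a hedged sketch of how the two options might be combined in a single update. The `buildLayoutUpdate` helper and the participant IDs are hypothetical. The exact shape of the `participants` property (here assumed to hold `video` and `audio` arrays of participant IDs) and the value format of `videoSettings.preferredParticipantIds` (here assumed to be a comma-separated string) are assumptions on my part, so please verify them against the method documentation before relying on this.

```javascript
// Builds the `layout` value for an update call.
// ASSUMPTION: `participants` takes `video` and `audio` arrays of
// participant IDs. Verify the exact shape in the Daily docs.
function buildLayoutUpdate({ selectedIds, preferredIds, omitPaused = false }) {
  return {
    preset: 'custom',
    // Selecting: which participants' streams the server should connect.
    participants: {
      video: [...selectedIds],
      audio: [...selectedIds],
    },
    // Switching: how the composition orders and filters the selected set.
    composition_params: {
      // ASSUMPTION: a comma-separated string of participant IDs.
      'videoSettings.preferredParticipantIds': preferredIds.join(','),
      'videoSettings.omitPaused': omitPaused,
    },
  };
}

const layout = buildLayoutUpdate({
  selectedIds: ['host-id', 'guest-a', 'guest-b'],
  preferredIds: ['guest-b', 'host-id'],
});

// With a daily-js call object (not created here), this might be sent as:
// call.updateLiveStreaming({ layout });
```

Three participants are selected, but only the order of two is expressed as a preference. Selecting is the slower operation, so the selected set would typically change rarely while the preferred order changes often.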

You can read more about these properties, including code examples, on the startRecording() method documentation or its counterpart startLiveStreaming(). These documentation sections are identical because the selection/switch mechanisms are the same regardless of the type of stream output.

Note that you can always do selection and switching in an updateRecording()/updateLiveStreaming() call. Although the documentation is provided under the start methods, these values can always be changed at runtime too. Also, if you want to send these updates from your server rather than from a client, you can use Daily’s REST API (the next section discusses this option in more detail).
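For the server-side route, a request might be assembled roughly like this. The base URL, the full path under `/rooms/`, the bearer token header and the `{ layout }` body shape are my assumptions here, so check the REST API reference for the authoritative details. The helper only builds the request and sends nothing.

```javascript
// ASSUMPTION: base URL and full path. Verify against the REST API reference.
const DAILY_API_BASE = 'https://api.daily.co/v1';

// Returns a URL and fetch options for a live stream layout update.
// Nothing is sent here; pass the result to fetch() on your own server.
function buildLiveStreamUpdateRequest(roomName, apiKey, layout) {
  return {
    url: `${DAILY_API_BASE}/rooms/${encodeURIComponent(roomName)}/live-streaming/update`,
    options: {
      method: 'POST',
      headers: {
        'Content-Type': 'application/json',
        Authorization: `Bearer ${apiKey}`,
      },
      body: JSON.stringify({ layout }),
    },
  };
}

const { url, options } = buildLiveStreamUpdateRequest('my-room', 'YOUR_API_KEY', {
  preset: 'custom',
  composition_params: { mode: 'dominant' },
});

// On your server: const res = await fetch(url, options);
```

Keeping the request construction separate from the network call makes it easy to unit test and keeps the API key on the server.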

Advanced switching with a VCS cloud function

There’s a limitation to the approach described above. We’re using declarative properties to tell the VCS rendering on the media server which participants should be included and excluded. These commands would typically be sent via the Daily API from the client session which started the live stream or recording session. The client maintains lists of participants and sends a complete update on every switch operation.

You may notice that your application is sending very repetitive data: it’s the same participant IDs again and again on every layout update, just slightly reordered. It would feel more natural if this switching logic could run on the server itself. If the server was capable of making these decisions independently, the client application could be simpler and wouldn’t need to send as much data over the API.

Consider a hypothetical application with the following requirements:

- There are two participant roles: a host and up to three guests.

- When the layout mode is `split`, the host should always be on the left-hand side, and the right-hand side should show the guest who is currently the active speaker.

- When the layout mode is `dominant`, the host should get a large view on the left-hand side, with guests shown as tiles on the right.

The VCS baseline composition already provides these two layout modes (and others too), but the switching logic specified above is non-standard since it depends on the “host” and “guest” role definitions that are unavoidably application-specific.

Is there a way we could make the layout modes “smart” so that they’d automatically pick the right participants to display, even though VCS itself doesn’t know anything about our application’s hosts and guests?

In fact there is. We can override parts of the VCS baseline composition by supplying our own code on the fly. The baseline composition includes a file called preferred-video-ids.js which encapsulates the default switching logic. You can find the code file in the VCS GitHub repo. If you look at that default implementation, you can see it exports a function called usePreferredParticipantIdsParam() which contains the logic to handle the videoSettings.preferredParticipantIds param discussed above.

By overriding this file with our own version uploaded using the VCS session assets feature, we can implement custom application logic to support the “host” and “guest” concepts on the server.

The application’s call flow could be:

- The host starts the live stream using `startLiveStreaming()` with the overridden version of preferred-video-ids.js supplied as a session asset.

  - At this point there may be guests already available.

- The host client uses application-specific VCS composition params to specify the participant IDs it knows about: its own “host” role, as well as any guests.

- If the guest list changes during the stream (a guest joins or disconnects), the host client will send an update to VCS using the application-specific composition param.

  - This is the only case where we need to send participant IDs to the server again.

- When switching layouts between `split` and `dominant`, the host client will simply send the regular `mode` param available on the VCS composition. Our override of `preferred-video-ids.js` will do switching automatically on the server.

  - Note that we’re assuming the host client will always be present in the room. What if it disconnects? Can someone else easily take up the host role in that situation? If you want to make this streaming setup really robust, you could orchestrate the layout changes from your own server using Daily’s REST API, specifically the live-streaming/update endpoint (or its recording counterpart). It works the same as the JS API we’re describing in this article, just with the difference that it’s server-to-server.

The initial method call to start the stream on the host client would look something like this:

    call.startLiveStreaming({
      // … Endpoint setup omitted for brevity …
      layout: {
        preset: 'custom',
        composition_params: {
          'mode': 'split',
          'myApp.hostId': 'participant-guid-1', // a participant ID
          'myApp.guestIds': 'participant-guid-2, participant-guid-3',
        },
        session_assets: {
          'preferred-video-ids.js': 'https://example.com/my-awesome-switching-override.js',
        },
      },
    });

Notice how we’re sending param values for myApp.hostId and myApp.guestIds. Those params clearly don’t appear in the VCS baseline composition’s params documentation, so how do we know they’re valid…? I’m letting you in on a bit of a secret here. The composition_params property is simply a JSON object that gets passed through to VCS on the server. You’re free to send whatever custom param values you want as long as they’re valid JSON (but please use a prefix to prevent name collisions and to identify your app, like “myApp” in this example).
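The later updates in the call flow could be built with small helpers like the ones below. The helper names are hypothetical, and the sketch assumes that an update only needs to carry the params that changed and that `preset: 'custom'` should be repeated on each update. Both are worth verifying against the method documentation.

```javascript
// Builds the update sent when the guest list changes.
// `myApp.hostId` and `myApp.guestIds` are the custom params from this post.
function buildGuestListUpdate(hostId, guestIds) {
  return {
    layout: {
      preset: 'custom',
      composition_params: {
        'myApp.hostId': hostId,
        // Sent as a comma-separated string, like in the start call.
        'myApp.guestIds': guestIds.join(','),
      },
    },
  };
}

// Switching the layout mode needs no participant IDs at all.
function buildModeUpdate(mode) {
  return { layout: { preset: 'custom', composition_params: { mode } } };
}

const guestUpdate = buildGuestListUpdate('participant-guid-1', [
  'participant-guid-2',
  'participant-guid-4',
]);
const modeUpdate = buildModeUpdate('dominant');

// With a daily-js call object (not created here):
// call.updateLiveStreaming(guestUpdate);
// call.updateLiveStreaming(modeUpdate);
```

Notice how small `modeUpdate` is. That's the payoff of moving the switching logic to the server.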

Of course sending data in params isn’t going to do anything useful unless you have custom logic on the server that uses the values somehow. This is where our override of `preferred-video-ids.js` comes into play. Let’s look at the function signature of the default implementation:

    export function usePreferredParticipantIdsParam(params, dominantVideoId) {
      // … Stuff happens to figure out a list of video IDs …
      return { preferredVideoIds, includeOtherVideoIds };
    }

The `use` prefix indicates this function is actually a custom React hook. (Don’t worry if you don’t understand the logic of React hooks; it’s not crucial for this exercise as we can simply follow the model given by the default implementation.)

The function receives an argument called params. These are the current composition param values. We’ll find our application-specific params myApp.hostId and myApp.guestIds in this object. We’re also getting a dominantVideoId which is provided here as a convenience in case we want to switch based on the dominant input flag, i.e. active speaker.

The function is expected to return an object containing two properties:

- `preferredVideoIds` is an array of video IDs that should be displayed in the layout.

- `includeOtherVideoIds` is a boolean that tells the VCS baseline composition whether it should also show every other available video input or not.

In our case, we don’t ever want to show anybody but the host and guests, so we’ll be returning false for includeOtherVideoIds.

Now we just need the code to build the preferredVideoIds array. We want to filter the available participants based on the three param values that the host can send: mode, myApp.hostId and myApp.guestIds. We’re also going to use dominantVideoId since our product spec says that in split mode we should show the host and the active speaker if available.

Below is a complete server-side implementation:

    export function usePreferredParticipantIdsParam(params, dominantVideoId) {
      const { availablePeers } = React.useContext(RoomContext);

      const mode = params['mode'];
      const hostId = params['myApp.hostId'];
      const guestIds = params['myApp.guestIds'];

      // The 'useMemo' hook ensures we only do this computation if one
      // of the inputs listed in the 2nd argument array has changed.
      const preferredVideoIds = React.useMemo(() => {
        const videoIds = [];

        const hostPeer = availablePeers.find((p) => p.id === hostId);
        if (hostPeer) videoIds.push(hostPeer.video.id);

        if (mode === 'split' && dominantVideoId) {
          // In this mode, show the 'dominant' input (i.e. active speaker) as the second one
          videoIds.push(dominantVideoId);
        } else {
          // In other modes (or if dominant is not set), pass through the guest list
          const guestPeers = parseCommaSeparatedList(guestIds).map((guestId) =>
            availablePeers.find((p) => p.id === guestId)
          );
          for (const p of guestPeers) {
            if (p) videoIds.push(p.video.id);
          }
        }
        return videoIds;
      }, [availablePeers, mode, hostId, guestIds, dominantVideoId]);

      return { preferredVideoIds, includeOtherVideoIds: false };
    }

Note that I’ve included only the function here for brevity. When overriding the file, you should also copy the header and footer from the original source.
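If you'd like to sanity-check the switching rules before uploading anything, one option is to pull the logic into a plain function that runs without React or VCS. The sketch below is a local test harness, not the override file itself. `pickVideoIds` is a hypothetical name, the peer objects are made up, and `parseCommaSeparatedList` here is my own stand-in for the helper of the same name that the hook calls, not the actual VCS implementation.

```javascript
// ASSUMPTION: a stand-in for the helper used by the hook.
// Splits on commas, trims whitespace, and drops empty entries.
function parseCommaSeparatedList(str) {
  if (typeof str !== 'string') return [];
  return str
    .split(',')
    .map((s) => s.trim())
    .filter((s) => s.length > 0);
}

// Same selection logic as the hook, minus React, so it can be unit tested.
function pickVideoIds({ availablePeers, mode, hostId, guestIds, dominantVideoId }) {
  const videoIds = [];
  const hostPeer = availablePeers.find((p) => p.id === hostId);
  if (hostPeer) videoIds.push(hostPeer.video.id);

  if (mode === 'split' && dominantVideoId) {
    // Split mode: host plus the active speaker.
    videoIds.push(dominantVideoId);
  } else {
    // Other modes: host plus every guest that is currently available.
    for (const guestId of parseCommaSeparatedList(guestIds)) {
      const peer = availablePeers.find((p) => p.id === guestId);
      if (peer) videoIds.push(peer.video.id);
    }
  }
  return videoIds;
}

// Hypothetical peers; the { id, video: { id } } shape mirrors what the hook reads.
const peers = [
  { id: 'host-1', video: { id: 'v-host' } },
  { id: 'guest-1', video: { id: 'v-g1' } },
  { id: 'guest-2', video: { id: 'v-g2' } },
];
const common = { availablePeers: peers, hostId: 'host-1', guestIds: 'guest-1, guest-2' };

console.log(pickVideoIds({ ...common, mode: 'split', dominantVideoId: 'v-g2' }));
// [ 'v-host', 'v-g2' ]
console.log(pickVideoIds({ ...common, mode: 'dominant', dominantVideoId: 'v-g2' }));
// [ 'v-host', 'v-g1', 'v-g2' ]
```

A harness like this also makes edge cases easy to explore, such as what should happen in split mode when the host is the active speaker.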

To make your override run on the server, you need to use session assets. You must store your override code file on your own server (this can be GitHub Pages or whatever hosting you prefer) and pass a URL reference to it, like this:

    call.startLiveStreaming({
      // … Other setup omitted for brevity …
      layout: {
        // … preset and composition_params as shown earlier …
        session_assets: {
          'preferred-video-ids.js': 'https://example.com/my-awesome-switching-override.js',
        },
      },
    });

The file name on your server can be anything as long as it has the .js file extension.

This kind of server-side code override is conceptually like a cloud function. When the streaming/recording session starts, you’re providing a piece of code to be executed in Daily’s rendering cloud. Your code stays resident and gets called upon whenever the VCS composition needs to make a decision about which video inputs to show. This allows us to extend the semantics of the baseline composition with our application’s own concepts like “host” and “guest”.

Summary

We examined real-world examples of TV and event production to get a better understanding of the “available/selected/switched-on” state framework for participants. The timing difference of these state transitions is essential to understanding when to use them: selection is a slower preparatory activity, while switching can happen within a single video frame.

When creating a recording or live stream on Daily, you can use properties available on the updateLiveStreaming() and updateRecording() methods, or their start counterparts, to select and switch participants. (See the section "Selecting Participants" in startRecording() method documentation for details.)

Finally, if you find yourself doing a lot of application-specific switching, you might want to look at a VCS cloud function override that lets you push this logic to Daily’s rendering cloud. This can simplify your client application design and reduce the number of API calls being made.

Remember Norma Desmond? Maybe we can adapt her closing line for this new world:

“All right, Mr. Cloud Function, I'm really ready for my close-up now. Make it automatic.”